Processing Kinesis Data Streams with Spark Streaming

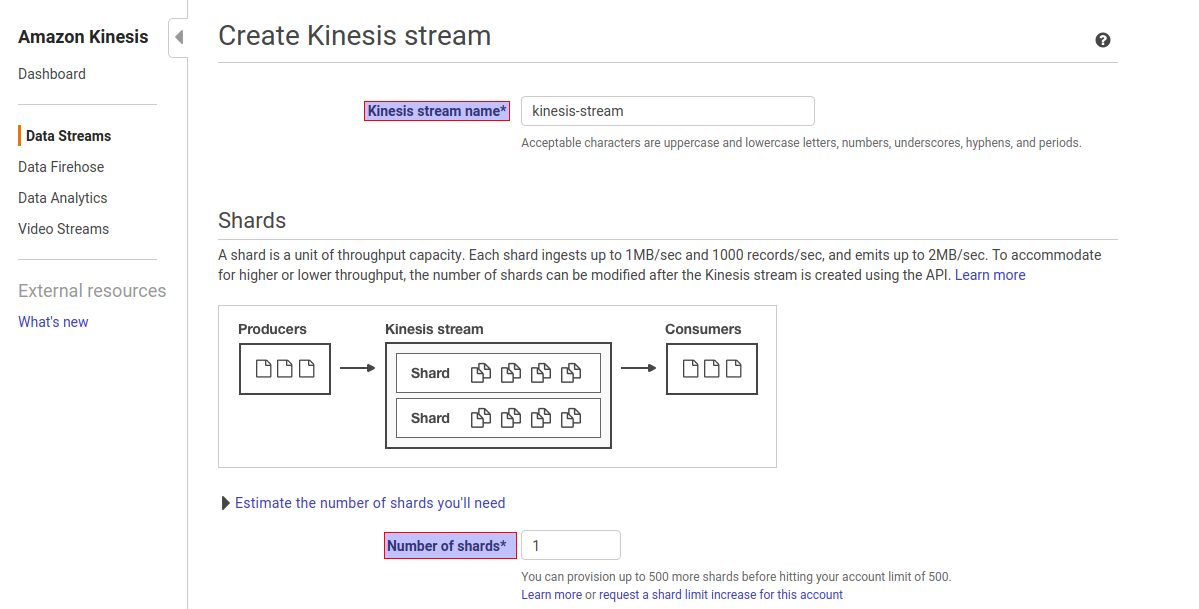

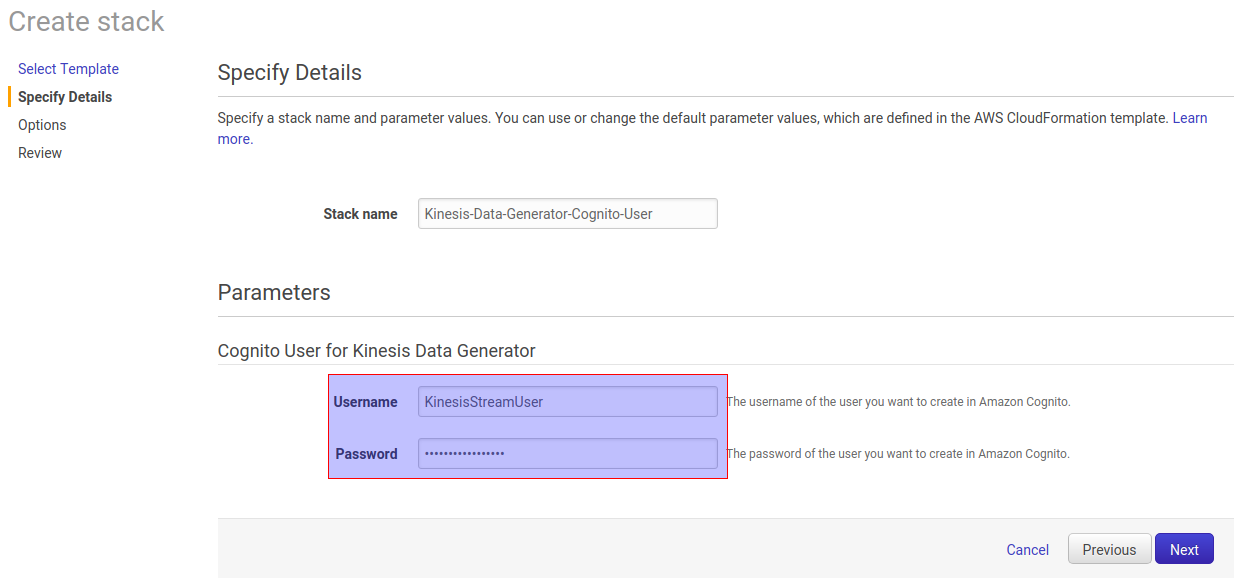

Step 1. Go to the Amazon Kinesis console and click Create Data Stream.

Step 2. Enter the Kinesis stream name and the number of shards, sized to the volume of the incoming data. In this case, the stream name is kinesis-stream and the number of shards is 1.

Shards in Kinesis Data Streams

A shard is a uniquely identified sequence of data records in a stream.
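To make the role of shards concrete, here is a minimal pure-Python sketch of how a record's partition key can be mapped to a shard. Kinesis hashes the partition key with MD5 into a 128-bit integer and routes the record to the shard whose hash key range contains that value. The helper names `shard_hash_ranges` and `shard_for_key` are hypothetical, and the even split of the key space is an assumption made for illustration; this is not the service's implementation.

```python
import hashlib

# Kinesis hash keys are 128-bit unsigned integers.
MAX_HASH_KEY = 2**128 - 1


def shard_hash_ranges(num_shards):
    """Split the 128-bit hash key space into contiguous ranges.

    Assumption for illustration: the ranges are evenly sized.
    """
    size = (MAX_HASH_KEY + 1) // num_shards
    ranges = []
    for i in range(num_shards):
        start = i * size
        # The last range absorbs any remainder so the whole space is covered.
        end = MAX_HASH_KEY if i == num_shards - 1 else (i + 1) * size - 1
        ranges.append((start, end))
    return ranges


def shard_for_key(partition_key, ranges):
    """Return the index of the shard whose range contains MD5(partition_key)."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_key = int.from_bytes(digest, "big")
    for index, (start, end) in enumerate(ranges):
        if start <= hash_key <= end:
            return index
    raise ValueError("hash key not covered by any shard range")


# With a single shard, as in the kinesis-stream example, every key maps to shard 0.
single = shard_hash_ranges(1)
print(shard_for_key("device-42", single))
```

With one shard every record lands in the same ordered sequence. Adding shards spreads partition keys across several sequences, which is why the shard count is chosen from the expected data volume.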

Spark Streaming with Kafka Example Spark By {Examples}

Apache Spark version 2.0 introduced the first version of the Structured Streaming API which enables developers to create end-to-end fault tolerant streaming jobs. Although the Structured.

Kinesis and Spark Streaming Advanced AWS Meetup August 2014

Spark Structured Streaming is a high-level API built on Apache Spark that simplifies the development of scalable, fault-tolerant, and real-time data processing applications. By seamlessly.

Spark Streaming Architecture, Working and Operations TechVidvan

Here we explain how to configure Spark Streaming to receive data from Kinesis.

Configuring Kinesis

A Kinesis stream can be set up at one of the valid Kinesis endpoints with 1 or more shards per the following guide.

Configuring Spark Streaming Application
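As a rough illustration of the application side, the following sketch wires a Kinesis stream into the legacy DStream API through `KinesisUtils.createStream` from `pyspark.streaming.kinesis`. It assumes a Spark release that still ships that module and that the `spark-streaming-kinesis-asl` package is on the classpath. The application name, the region `us-east-1`, and the helper names `kinesis_endpoint_url`, `build_kinesis_dstream`, and `main` are hypothetical. The stream name `kinesis-stream` is the one used elsewhere on this page.

```python
def kinesis_endpoint_url(region):
    """Build the regional Kinesis Data Streams endpoint URL."""
    return f"https://kinesis.{region}.amazonaws.com"


def build_kinesis_dstream(ssc, app_name, stream_name, region, checkpoint_seconds=10):
    """Create a DStream of records read from a Kinesis stream.

    pyspark is imported lazily so this module can be loaded without Spark installed.
    """
    from pyspark.streaming.kinesis import KinesisUtils, InitialPositionInStream

    return KinesisUtils.createStream(
        ssc,
        app_name,                        # also used by the KCL as its checkpoint table name
        stream_name,
        kinesis_endpoint_url(region),
        region,
        InitialPositionInStream.LATEST,  # start from the newest records
        checkpoint_seconds,              # how often shard positions are checkpointed
    )


def main():
    """Hypothetical driver; run it with spark-submit on a cluster with AWS credentials."""
    from pyspark import SparkContext
    from pyspark.streaming import StreamingContext

    sc = SparkContext(appName="kinesis-example-app")
    ssc = StreamingContext(sc, 10)  # 10-second batch interval
    records = build_kinesis_dstream(ssc, "kinesis-example-app", "kinesis-stream", "us-east-1")
    records.pprint()                # prints a sample of each batch on the driver
    ssc.start()
    ssc.awaitTermination()
```

Calling `main()` is left to the reader because it needs a running Spark environment and access to the stream; the endpoint helper can be checked on its own.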

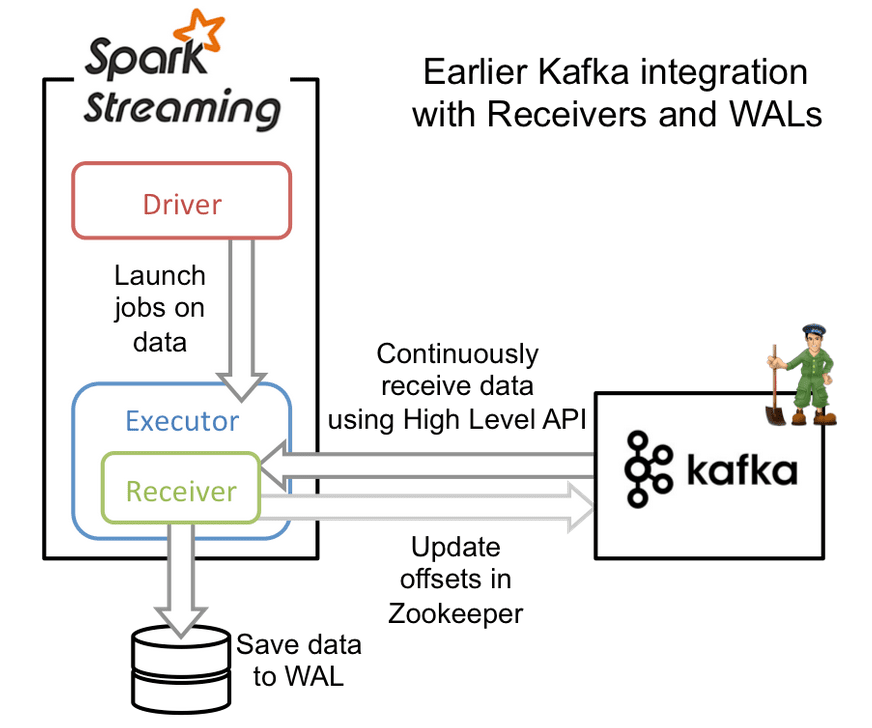

Improvements to Kafka integration of Spark Streaming Databricks Blog

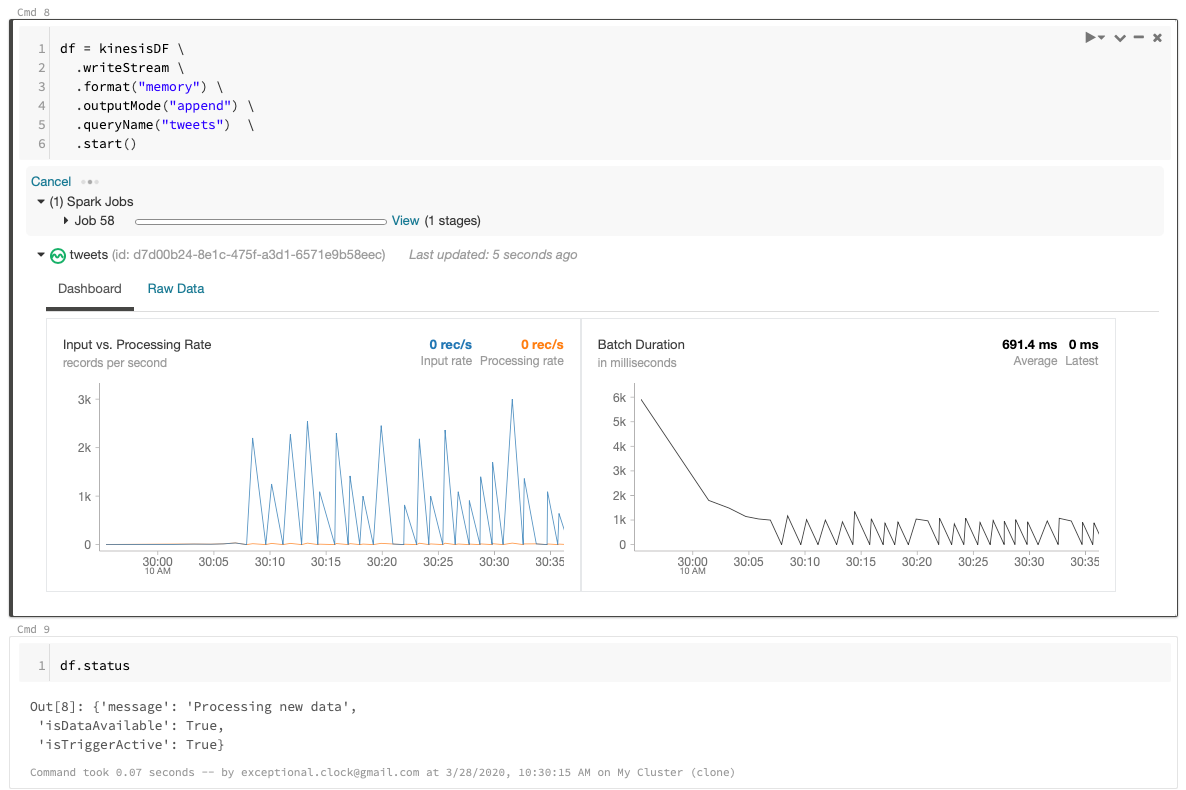

Apache Spark's Structured Streaming with Amazon Kinesis on Databricks, by Jules Damji, August 9, 2017, in Company Blog. On July 11, 2017, we announced the general availability of Apache Spark 2.2.0 as part of Databricks Runtime 3.0 (DBR) for the Unified Analytics Platform.

Spark Streaming, Kinesis, and EMR Pain Points by Chris Clouten disneystreaming

This article describes best practices when using Kinesis as a streaming source with Delta Lake and Apache Spark Structured Streaming. Amazon Kinesis Data Streams (KDS) is a massively scalable and durable real-time data streaming service. KDS continuously captures gigabytes of data per second from hundreds of thousands of sources such as website.

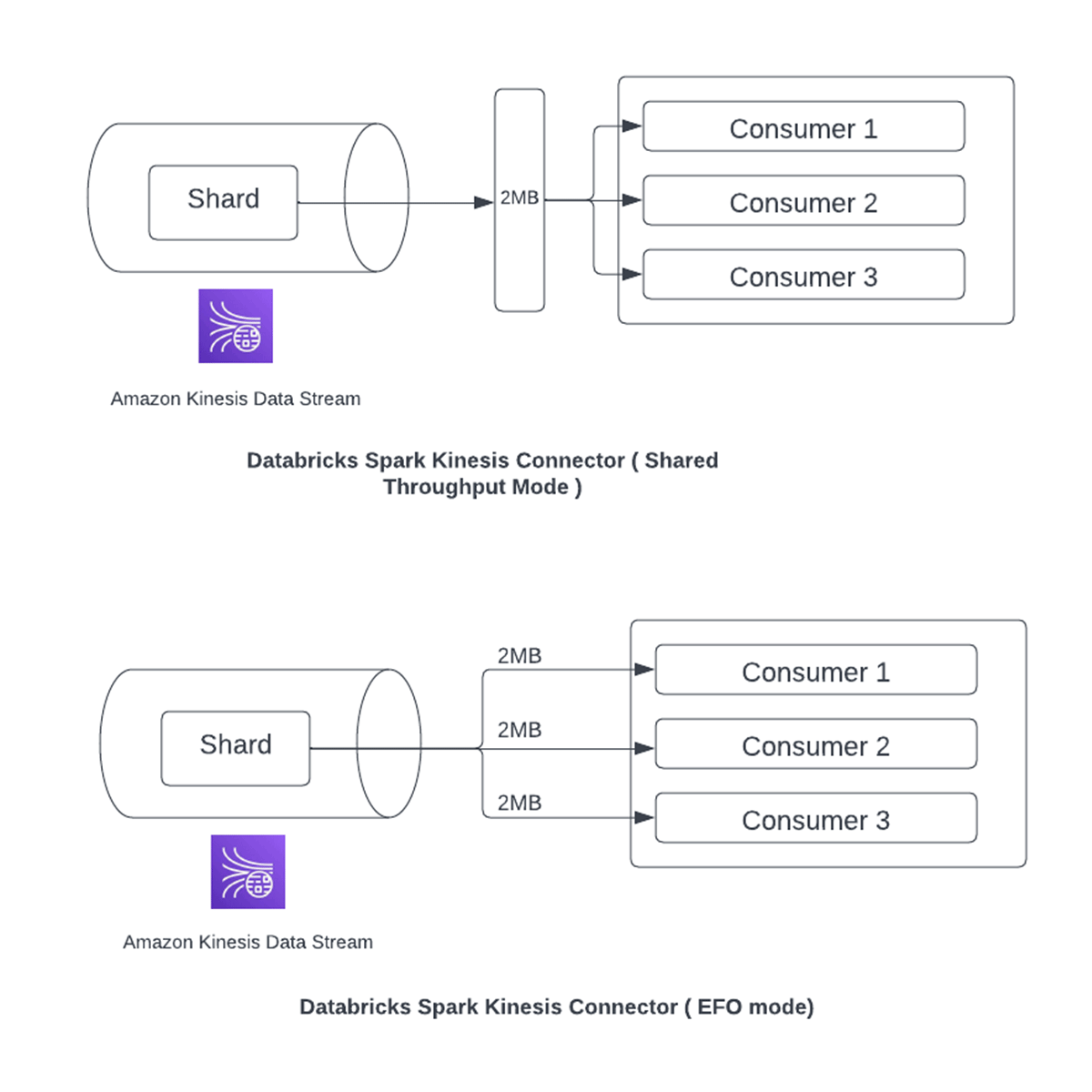

Simplify Streaming Infrastructure With Enhanced FanOut Support for Kinesis Data Streams in

This Spark Streaming with Kinesis tutorial intends to help you become better at integrating the two. In this tutorial, we'll examine some custom Spark Kinesis code and also show a screencast of running it. In addition, we're going to cover running, configuring, sending sample data and AWS setup.

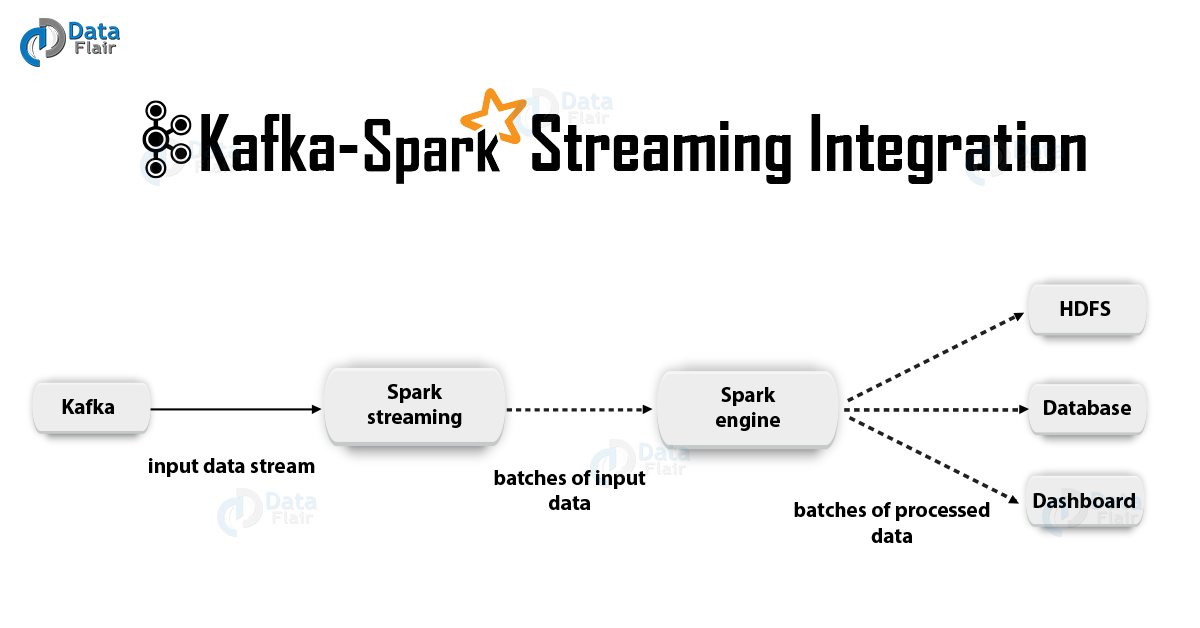

Apache Kafka + Spark Streaming Integration DataFlair

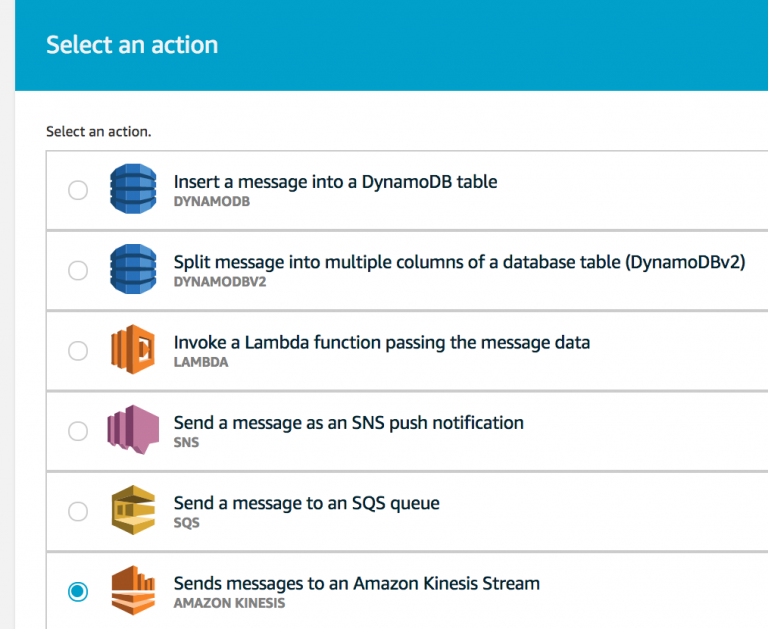

For more information, see Example: Read From a Kinesis Stream in a Different Account. AWS Glue streaming ETL jobs can auto-detect compressed data, transparently decompress the streaming data, perform the usual transformations on the input source, and load to the output store. Choose Spark streaming.

An Introduction to Spark Streaming by Harshit Agarwal Medium

Spark Structured Stream - Kinesis as Data Source. I am trying to consume Kinesis data stream records using PySpark Structured Streaming. I am trying to run this code in an AWS Glue batch job.

Spark Streaming Different Output modes explained Spark By {Examples}

I modified this example and used my own values for "app-name", "stream-name" and "endpoint-url". I have placed various print lines within my code. When running the job with "spark-submit", I do not see any of the print lines in the stdout logs. Can someone please explain where I can find the system out print lines?

Stateful Transformations in Spark Streaming TechVidvan

Apache Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. For more information on consuming Kinesis Data Streams using Spark Streaming, see Spark Streaming + Kinesis Integration.

IoT with Amazon Kinesis and Spark Streaming on Qubole

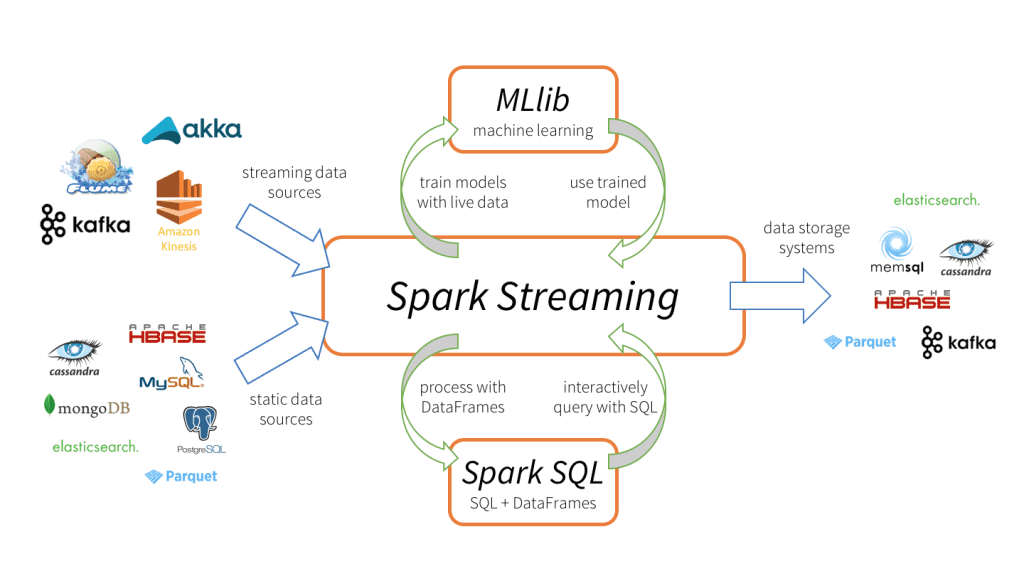

Spark Streaming receives live input data streams and divides the data into batches, which are then processed by the Spark engine to generate the final stream of batched results. For more information, see the Spark Streaming Programming Guide.
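The batching idea can be sketched in plain Python without Spark. The example below groups timestamped events into fixed-interval batches and then runs the same function on each batch, which is roughly what the engine does with each micro-batch. The function names `to_micro_batches` and `count_values` and the sample events are hypothetical, chosen only for illustration.

```python
from collections import defaultdict


def to_micro_batches(events, batch_interval):
    """Group (timestamp_seconds, value) events into fixed-length batches.

    Returns a list of (batch_start_time, [values]) sorted by batch start time.
    """
    batches = defaultdict(list)
    for timestamp, value in events:
        # Each event belongs to the interval that contains its timestamp.
        batch_start = int(timestamp // batch_interval) * batch_interval
        batches[batch_start].append(value)
    return sorted(batches.items())


def count_values(values):
    """Example per-batch computation: count occurrences of each value."""
    counts = {}
    for value in values:
        counts[value] = counts.get(value, 0) + 1
    return counts


events = [(0.5, "a"), (1.2, "b"), (2.1, "a"), (3.9, "a"), (4.0, "c")]
for batch_start, values in to_micro_batches(events, batch_interval=2):
    # The same processing step is applied to every batch in turn.
    print(batch_start, count_values(values))
```

A shorter batch interval lowers latency but gives each batch less data to amortize scheduling overhead over, so the interval is usually tuned to the workload.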

What is Spark Streaming and what does it offer? Alura

Spark Streaming is the previous generation of Spark's streaming engine. There are no longer updates to Spark Streaming and it's a legacy project. There is a newer and easier to use streaming engine in Spark called Structured Streaming. You should use Spark Structured Streaming for your streaming applications and pipelines.

Optimize SparkStreaming to Efficiently Process Amazon Kinesis Streams AWS Big Data Blog

Apache Spark Streaming is a scalable, high-throughput, fault-tolerant streaming processing system that supports both batch and streaming workloads. It is an extension of the core Spark API to process real-time data from sources like Kafka, Flume, and Amazon Kinesis to name a few.

Streaming twitter analysis Spark & Kinesis Towards Data Science

Feb 26, 2021 -- This tutorial describes a real-time analytics framework using Spark Streaming and window functions on Kinesis, the AWS real-time streaming service. Amazon Kinesis Data.